
December 12, 2023

In early 2023, Betadots and Vox Pupuli adopted the Puppet containers, and this article has been updated to reflect their new locations. Details of the adoption can be found here.

If you’ve ever wanted to get started with Puppet or Docker — or both — you’ve probably faced a bit of a conundrum. Should I use Puppet to deploy Docker on my nodes, and then use Puppet to define container images? Or should I use Docker containers to deploy Puppet so I can test dashboards and other modules without having to build out my infrastructure?

In reality, you can do both. Puppet in Docker is a modern way of packaging and releasing Puppet software (including Puppet Server and PuppetDB) using Docker.

In this blog post, I’ll show you how to use some of the Puppet tools to create working containerized environments. You may well try these in development, but I think you’ll quickly see how you can transfer what you learn to production applications.

Why Puppet in Docker?

Puppet in Docker is a way to run Puppet and other Puppet software such as Puppet Server and PuppetDB.

By packaging up these components as Docker images we:

- Allow for running a Puppet infrastructure (e.g., Puppet Server, PuppetDB, PostgreSQL) on any host that can run (or only runs) Linux containers.

- Allow for running and scaling the same Puppet infrastructure on top of cluster managers like Kubernetes, Rancher, DC/OS or Docker Swarm.

- Create a great local Puppet development environment with Docker. This can make it much easier to test a new version of Puppet or build something against the PuppetDB API.

Puppet Docker Images: What's Available Today?

Betadots supports the community in building continuous integration (CI) pipelines and adopting container configuration, and offers commercial support. Vox Pupuli hosts the code for two images: a puppetserver image for running Puppet Server (either standalone or with an accompanying PuppetDB), and a PuppetDB image.

The voxpupuli/crafty repository holds the compose files, while the Dockerfiles live in the individual container repositories. Please do open issues or pull requests with your improvements, problems or suggestions. One reason for releasing images ourselves is to encourage collaboration.

When you start the server containers, you should see quite verbose output showing the startup of the server, the system configuration and the setup of the CA.

How to Run Puppet and Docker Containers: A Tutorial

Getting Started: Install Docker With Puppet

Whether you’re just getting started with containers or you’ve been using them for a while, one of the easiest ways to deploy Docker is with the Puppet module.

To deploy Docker on any node managed by your Puppet server, you can simply add the basic class:

include 'docker'

There are other options for the class, but that will get you started, particularly if you’re looking to do your work on a development virtual machine.
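As a slightly fuller starting point, the sketch below writes a small manifest that includes the class and also pre-pulls the Ubuntu image. It assumes the docker module from the Puppet Forge is installed and that its docker::image defined type accepts an image_tag parameter, as I understand its README to describe. The write_docker_manifest helper and the /tmp/docker.pp path are hypothetical names used only for illustration.

```shell
# Sketch only: write_docker_manifest is a hypothetical helper, not part of Puppet.
# It writes a manifest to the given path and prints that path.
write_docker_manifest() {
  manifest_path="$1"
  cat > "$manifest_path" <<'EOF'
# Install and start Docker using the module defaults.
include docker

# Assumption: docker::image is the module's defined type for pulling images.
docker::image { 'ubuntu':
  image_tag => 'latest',
}
EOF
  echo "$manifest_path"
}

# Usage (requires Puppet and the docker module on the node):
#   write_docker_manifest /tmp/docker.pp
#   puppet apply --noop /tmp/docker.pp   # preview the changes without applying them
```

Running with --noop first is a cheap way to see what Puppet would change before letting it touch the node.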

Once deployed to a node, Docker will run and behave just as it would if you installed it manually. Using Puppet to automate this basic step, though, is a great way to deploy as many instances as you want, the same way every time.

With Docker installed, you can start running some simple tests, like pulling down the latest version of the Ubuntu image:

$ docker run -it ubuntu:latest /bin/bash

Let’s take it a step further by having Docker create a new container with Puppet inside, which can perform any instruction you would pass in a manifest. For example:

$ docker run --name apply-test puppet/puppet-agent apply -e 'file { "/tmp/adhoc": content => "Written by Puppet" }'

This will pull the puppet/puppet-agent image from Docker Hub and use Puppet to apply a change, namely creating a file in /tmp/adhoc containing the words, “Written by Puppet.”

If you now run a diff on that container, you’ll see what’s changed from the original image. In this case, upon running, the container created new folders and added content to them, including the /tmp/adhoc file:

root@node02:~# docker diff apply-test

C /etc

C /etc/puppetlabs

C /etc/puppetlabs/puppet

A /etc/puppetlabs/puppet/ssl

A /etc/puppetlabs/puppet/ssl/certificate_requests

A /etc/puppetlabs/puppet/ssl/certs

A /etc/puppetlabs/puppet/ssl/private

A /etc/puppetlabs/puppet/ssl/private_keys

A /etc/puppetlabs/puppet/ssl/public_keys

...

C /tmp

A /tmp/adhoc

This is a good way to experiment with and learn Puppet with very little infrastructure or overhead. Instead of building out a full virtual machine and setting it up as a Puppet node, you can use a straightforward Docker command to see how Puppet would make a change or apply some action. It’s fast and you can quickly see your workflows in action. If something breaks, you can just remove the containers and start over.
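The run, inspect and start-over loop described here can be wrapped in a small helper. This is a minimal sketch: adhoc_apply is a hypothetical function name, and it reuses the puppet/puppet-agent image and the docker run, docker diff and docker rm commands exactly as shown in this article.

```shell
# Sketch only: adhoc_apply NAME CODE
# Applies a snippet of Puppet code in a named throwaway container,
# prints the filesystem changes, then removes the container.
adhoc_apply() {
  name="$1"
  code="$2"

  # Same invocation as the apply-test example, with the name and code passed in.
  docker run --name "$name" puppet/puppet-agent apply -e "$code"
  status=$?

  # Show what the run changed relative to the original image.
  docker diff "$name"

  # Remove the stopped container so the name can be reused next time.
  docker rm "$name" >/dev/null

  return "$status"
}

# Usage:
#   adhoc_apply apply-test 'file { "/tmp/adhoc": content => "Written by Puppet" }'
```

Because the container is removed at the end of every call, each experiment starts again from the unmodified image.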

Run Puppet Server on Top of Docker

You can actually move well beyond launching a handful of containers, and run your Puppet infrastructure on top of a containers-as-a-service platform. This can be accomplished with the puppetserver image, which will deploy a fully functioning Puppet server:

$ docker run --net puppet --name puppet --hostname puppet ghcr.io/voxpupuli/container-puppetserver:v1.0.0-8

In this example, the Puppet server is created in a container called puppet on a Docker network named "puppet." The only piece you really need is:

docker run ghcr.io/voxpupuli/container-puppetserver:v1.0.0-8

The other bits allow you to attach your server to a specific network, name the container for easy reuse, and set the hostname so container-based agent nodes can find it.
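One detail worth spelling out: the --net puppet option refers to a user-defined network, which Docker does not create for you. A minimal sketch of the full sequence follows, assuming no network named puppet exists yet. The start_puppet_server function name is hypothetical, and the -d flag (run in the background) is an addition to the command above.

```shell
# Sketch only: start_puppet_server is a hypothetical helper.
start_puppet_server() {
  image="ghcr.io/voxpupuli/container-puppetserver:v1.0.0-8"

  # Create the user-defined network on first use; skip if it already exists.
  docker network inspect puppet >/dev/null 2>&1 || docker network create puppet

  # Same options as above, plus -d so the server keeps running in the background.
  docker run -d --net puppet --name puppet --hostname puppet "$image"
}

# Usage:
#   start_puppet_server
#   docker logs -f puppet   # follow the startup output
```

Other containers attached to the same network can then resolve the server by its container name, puppet.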

Docker Compose uses a YAML file format that lets you describe a series of containers in key-value pairs and define how they should be related and linked. You can manually install docker-compose or install it using the docker::compose class in the docker module mentioned above.

To test the power of Docker Compose, create a compose.yaml (or docker-compose.yml) file, or download the sample from the examples.

The file describes several container images, including puppetserver, postgres, puppetboard and puppet. Puppetboard is a browser-based dashboard component that will become accessible when Docker Compose completes. Ensure you also download the .env file if using the example.
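To give a feel for the format without reproducing the real example, here is a deliberately stripped-down sketch containing only the Puppet Server service. It is not the file from the examples: the service name puppet, the published port 8140 (Puppet Server's standard port) and the write_compose_sketch helper are assumptions made for illustration, and the PuppetDB, PostgreSQL and Puppetboard services are left out entirely.

```shell
# Sketch only: write_compose_sketch DIR writes a one-service compose.yaml into DIR.
write_compose_sketch() {
  target_dir="$1"
  cat > "$target_dir/compose.yaml" <<'EOF'
# Minimal illustration, not the upstream example file.
services:
  puppet:
    image: ghcr.io/voxpupuli/container-puppetserver:v1.0.0-8
    hostname: puppet
    ports:
      - "8140:8140"
EOF
  echo "$target_dir/compose.yaml"
}

# Usage:
#   mkdir -p ~/puppet-sketch && write_compose_sketch ~/puppet-sketch
```

This is roughly the Compose equivalent of the single docker run command shown earlier. Compose creates a default network for the project, so no separate network step is needed.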

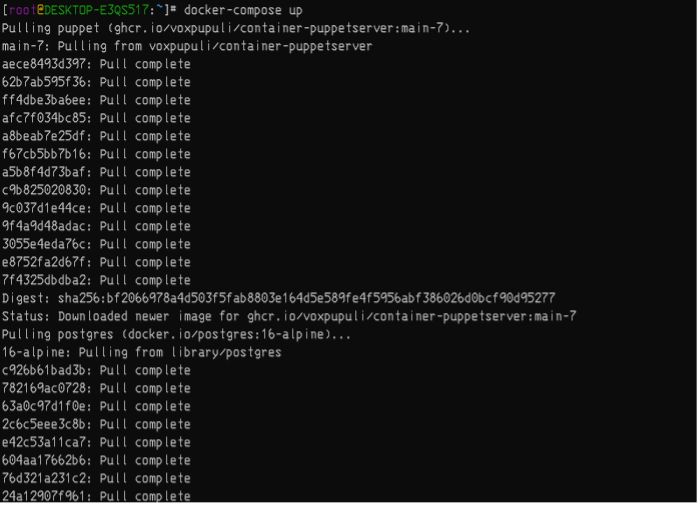

Everything will be pulled, built and booted from a single command executed from the directory where you’ve saved your file:

$ docker-compose up

Figure 1: Several Puppet nodes are deployed as containers using docker-compose
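Depending on how Docker was installed, Compose may be available as the standalone docker-compose binary used above or as the docker compose plugin. The following sketch uses whichever is present. The compose wrapper function is a hypothetical convenience, and the subcommands in the usage notes (up -d, ps, logs -f, down) are standard Compose commands.

```shell
# Sketch only: compose is a hypothetical wrapper around the two Compose front ends.
compose() {
  if docker compose version >/dev/null 2>&1; then
    # Compose is installed as a Docker CLI plugin.
    docker compose "$@"
  else
    # Fall back to the standalone binary.
    docker-compose "$@"
  fi
}

# Usage, from the directory that holds the compose file:
#   compose up -d     # start everything in the background
#   compose ps        # list the running services
#   compose logs -f   # follow the combined output
#   compose down      # stop and remove the containers
```

Running compose down followed by compose up -d is the quickest way to start over after an experiment goes wrong.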

Results

You should now begin to see how the combination of Puppet and Docker gives you new and powerful ways to expand your development environment. Instead of deploying one Puppet node at a time, you can use simple Docker commands to do the work for you. That means you can spend less time building the platform, and more time developing and testing. And because these environments are up and running in minutes, you can deploy as many as you want almost anywhere you want — and easily start over with a clean install every time.

Thanks to the Community for Puppet & Docker

We know this is useful to some already because of a great deal of prior art from within the Puppet community. A simple search on Docker Hub today shows more than 400 Docker images matching the search term Puppet. And there have been several excellent blog posts from people over the past year or so, with instructions and examples of how to roll your own images. We took inspiration from many of these efforts, but struck out on our own for the implementation for a few reasons:

- We want to balance ease of first use with more advanced features. Ultimately, more advanced users can always use the images as a base image to build from, or even take and modify the Dockerfile.

- The best practice around Dockerfiles is still emerging. As well as adhering to some of those practices, we’re looking to push the state of the art a little with the accompanying build toolchain.

- By providing an integrated set of images, we can more easily create full-stack examples, and we can be sure to release updates to the images with the latest Puppet software as it becomes available.

We’re hoping the community will find value in a central set of images aimed at the majority use case.

What’s Next for Puppet & Docker?

This is a new and experimental way of installing and running Puppet, intended mainly for testing and for early adopters. We’re releasing this to the Puppet community in order to get feedback and learn. We hope it's useful now, but software improves with feedback and input from users (that’s you!). We’re interested in the interface, in the defaults chosen, in how you’d like to be able to use these images (but can’t yet). The current release also assumes a level of Docker knowledge; we wonder if there are people interested in some of these capabilities who maybe don’t have, or don’t want, a Docker-centered approach.

It’s worth noting here that the aim isn’t to replace the existing battle-tested packages we already provide, but to supplement them so people working with container-centric infrastructures can still take advantage of all Puppet has to offer. This current release of images focuses on the various open source components, but we’d also love to hear from Puppet Enterprise customers who find this approach compelling.

This is just the start. We have a number of ideas for building on this initial release, as well as building with it. We’re looking forward to seeing what you think.
