Recent Puppet Releases

Recent Puppet Releases

Puppet Enterprise Platform

2025.10 Release Notes 2025.9 Release Notes

Explore Puppet Enterprise Advanced

Starting with the next Puppet Enterprise major version (projected August 2026) Puppet Enterprise will transition from the current “Long Term Support” and “Short Term Support” model (STS/LTS) model to the ‘Latest’ and ‘Latest -1’ support model. For full details, see Puppet Enterprise Platform Support Lifecycle.

Back to topPuppet Enterprise (PE) 2025.10 is now available.

This release ensures consistent behavior when working tasks and plans.

What’s new

- Resolved: An issue affecting the ability to list tasks and plans has been addressed.

Learn more

For a complete list of enhancements, fixes, Puppet-wide agent deprecations, and upgrade notices introduced recently, see the PE 2025.9 Release Notes

2025.9 Release Notes 2023.8.9 Release Notes

Explore Puppet Enterprise Advanced

Starting with the next Puppet Enterprise major version (projected August 2026) Puppet Enterprise will transition from the current “Long Term Support” and “Short Term Support” model (STS/LTS) model to the ‘Latest’ and ‘Latest -1’ support model. For full details, see Puppet Enterprise Platform Support Lifecycle.

We are pleased to announce Puppet Enterprise (PE) 2025.9 and 2023.8.9 are now available.

The latest Puppet Enterprise releases focus on strengthening operational reliability, reinforcing security posture, and giving teams better visibility and control as they manage complex infrastructure environments. Together, these releases allow organizations to move forward with confidence, balancing stability, security, and operational efficiency based on their needs.

Back to topPuppet Enterprise 2025.9

Operate with fewer interruptions

Puppet Enterprise 2025.9 reduces friction in common operational areas so teams can maintain predictable automation, recover more quickly when issues occur, and spend less time diagnosing tooling behavior.

This release helps teams:

- Maintain consistent patching and automation outcomes

- Reduce disruption during maintenance windows

- Keep systems available, compliant, and under control

Greater consistency and visibility in Advanced Patching:

Improvements to advanced patching workflows provide clearer feedback when patch group creation fails, add retry support when issues occur, and introduce real time visibility into patching progress.

Teams can now monitor patch execution as it happens, adjust patching strategies as environments change, and maintain momentum during maintenance windows without unnecessary guesswork.

More reliable and manageable automation execution:

Teams can now edit workflows or stop running workflows directly from the Puppet Enterprise console or via equivalent APIs. This gives operators greater control when conditions change and helps reduce the impact of long running or misconfigured automation.

In addition, fixes to Infra Assistant plan execution and browser certificate handling improve consistency across environments.

Strengthened security and platform foundations

Puppet Enterprise 2025.9 reinforces the underlying platform so it remains a trusted part of security and compliance sensitive environments.

Key outcomes include:

Reduced exposure to known vulnerabilities

This release includes security fixes across core components, helping organizations lower risk associated with outdated dependencies.

Support for modern operating systems

Expanded agent support, including Debian 13 on amd64 and aarch64, helps teams stay aligned with current platform standards.

Simpler, more maintainable integrations

The removal of deprecated metrics APIs reduces long term maintenance overhead and encourages use of supported interfaces.

Faster orchestration

Performance improvements were made to the orchestrator, improving responsiveness when selecting tasks and plans in the PE console and when running individual tasks and plans.

Back to topPuppet Enterprise 2023.8.9

Security maintenance with minimal operational change

Puppet Enterprise 2023.8.9 is a maintenance focused release designed for organizations that prioritize stability while remaining on a supported version of Puppet Enterprise.

This release helps teams:

- Address known security vulnerabilities without introducing new operational complexity

- Maintain compatibility with modern operating systems

- Remain aligned with supported versions of Puppet Enterprise

Key outcomes include:

Complete security remediation

This release includes the same set of security fixes as newer PE versions, allowing teams to reduce exposure to known vulnerabilities while maintaining a conservative operational posture.

Continued support for modern agents

Agent support for Debian 13 on amd64 and aarch64 ensures compatibility with current operating systems while remaining on the 2023.8 release line.

Back to topWhy upgrade?

Upgrading to the latest supported Puppet Enterprise releases helps organizations:

- Reduce operational risk through more predictable patching and automation workflows

- Improve visibility and control across maintenance and automation activities

- Strengthen security posture with fixes for known vulnerabilities

- Align infrastructure automation with modern operating systems and environments

- Maintain compliance by staying on supported versions of the platform

Learn more

For a complete list of enhancements, fixes, Puppet-wide agent deprecations, and upgrade notices, see:

2025.8 Release Notes2023.8.8 Release Notes

Explore Puppet Enterprise Advanced

Starting in August 2026, Puppet is transitioning its Puppet Enterprise® software support offerings from the “Long Term Support” and “Short Term Support” model to the "Latest" and "Latest - 1" model. See release notes for important information on how this may impact you.

We are pleased to announce Puppet Enterprise (PE) 2025.8 and 2023.8.8 are now available.

The latest Puppet Enterprise releases strengthen operational reliability and reinforce your security posture, helping teams stay confident and productive as they manage complex infrastructure environments.

Greater consistency in Advanced Patching

PE 2025.8 resolves an issue that could interrupt patching workflows when nodes were removed from all patch groups. With this fix, teams can maintain predictable Puppet runs and avoid unnecessary troubleshooting so they can focus on higher value work.

Strengthened security with a complete CVE fix

Both PE 2025.8 and PE 2023.8.8 include the full resolution for CVE-2025-58767, ensuring your Puppet Server dependencies are up to date and secure. This gives enterprises the assurance that their automation foundation remains protected against known vulnerabilities.

Learn more

See the full technical details in the release notes:

PE 2025.8 Release Notes PE 2023.8.8 Release Notes

2023.8.7 Release Notes2025.7 Release Notes

Explore Puppet Enterprise Advanced

Starting in August 2026, Puppet is transitioning its Puppet Enterprise® software support offerings from the “Long Term Support” and “Short Term Support” model to the "Latest" and "Latest - 1" model. See release notes for important information on how this may impact you. Until the transition, PE and Puppet Enterprise Agent (PEA) entries will continue to be updated regularly.

We are excited to announce Puppet Enterprise (PE) 2025.7 and Puppet 2023.8.7 are now available, delivering meaningful improvements in automation productivity, operational reliability, and security. Together, these releases help teams modernize infrastructure operations while maintaining the stability and predictability required in enterprise environments.

Accelerate automation with AI assisted workflows

New in PE 2025.7

PE 2025.7 introduces new enhancements to Infra Assistant – an exclusive capability of Puppet Enterprise Advanced - designed to help teams build and evolve automation more efficiently. Natural language assisted workflows for Puppet code, tasks, and plans reduce time to value, streamline collaboration, and lower the barrier to scaling automation across teams. Performance and setup improvements further support faster, smoother daily interactions.

Greater control and insight for patching operations

Enhanced in PE 2025.7

Enhancements to Advanced Patching in Puppet Enterprise Advanced give teams clearer visibility and oversight into patching activities, helping reduce operational risk and improve confidence in patch execution. Key improvements include:

- Simpler patch group management, making it easier to adapt patching strategies as environments change

- Clearer visibility into patch schedules and run status, helping teams quickly understand what is patched and what still needs attention

- Proactive alerting for patching activity, enabling faster response to issues that could impact availability or compliance

These updates support more predictable patching outcomes and help teams maintain security and compliance with less manual effort.

Expanded platform support for modern environments

Available in both 2025.7 and 2023.8.7

Both releases extend support for newer operating systems, including Red Hat Enterprise Linux 10. This allows organizations to modernize infrastructure while continuing to rely on Puppet Enterprise for consistent automation and governance across mixed environments.

Stronger Security Posture

Delivered in both 2025.7 and 2023.8.7

Both releases incorporate multiple security updates and defect fixes across Puppet Enterprise, agents, and supporting services. These improvements help reduce exposure to known vulnerabilities, strengthen overall platform stability, and reinforce Puppet Enterprise as a trusted foundation for managing critical infrastructure.

Why Upgrade?

- Move faster with AI assisted automation

- Expand your deployment options with RHEL 10 support

- Reduce operational risk with more resilient backups

- Safeguard your infrastructure with critical security fixes

Learn more

For full technical details and a complete list of enhancements and fixes, see:

2023.8.6 release notes 2025.6 release notes

Explore Puppet Enterprise Advanced

Puppet Enterprise (PE) 2023.8.6 and 2025.6 (PE and PE Advanced) are here!

We are excited to announce that Puppet Enterprise (PE) 2023.8.6 and 2025.6 (PE and PE Advanced) are now available.

PE 2025.6 incorporates support for Puppet Edge, a powerful new capability for Puppet Core and the Puppet Enterprise platform that extends governance to network and edge devices, including firewalls, routers, switches, and POS systems. Valuable enhancements are also made to Advanced Patching, available exclusively in PE Advanced.

Both PE 2025.6 and PE 2023.8.6 receive more than 50 important security and bug fixes and removal of support for several unsupported operating systems.

Back to topNew in 2025.6

Extending Puppet to the Edge

Puppet Edge addresses the risk of unmanaged network devices with agentless, task-based orchestration, complementing existing desired state automation for servers and delivering flexibility without compromising compliance. Teams can now manage their full infrastructure estate more easily without having to maintain and operate multiple tools.

With features like Playbook Runner that retains value from prior automation investments, consistent policy management across distributed systems, embedded visibility into device state, and seamless integration with Puppet workflows, organizations can apply the same trusted automation and governance used for servers to edge environments – achieving broader consistency and control across hybrid estates.

Teams running Puppet Enterprise Advanced gain additional capabilities, including advanced orchestration workflows from the PE console and AI-generation of content to help manage devices using the new Infra Assistant: code assist capability.

Advanced Patching: New PE console capabilities

From the PE console, Advanced Patching users can:

- Edit a maintenance window

- Edit a blackout window

- Edit scheduled patch jobs

Advanced Patching API: New endpoints

New API endpoints are included to:

- Edit an existing maintenance window

- Edit an existing blackout window.

- Edit an existing patch job.

Resolved issues

The Puppet team is committed to resolving issues and this release incorporates fixes for the following issues (refer to release notes for additional details)

PE 2023.8.6 and PE 2025.6

- Puppet backup create/restore no longer fails when the puppet_enterprise::puppetdb_port is changed

- Puppet::Network::Format error message is no longer missing actionable details

PE 2025.6 only

- Infra Assistant no longer fails to start up when replication is enabled

- Advanced Patching no longer fails to startup when replication is enabled

- Tasks and plans that are not intended to be run directly by users are no longer displayed

- Plans fail to submit results if tasks are still running

Deprecations and removals

Agent platforms removed

Support is removed in both 2023.8.6 and 2025.6 for the following operating system platforms:

- macOS 11 x86_64

- macOS 12 x86_64

- macOS 12 ARM

Client tool platforms removed

Support is removed in both 2023.8.6 and 2025.6 for the following client tool platforms:

- macOS 11 x86_64

- macOS 12 x86_64

- macOS 12 ARM

- macOS 12 M1

- macOS 12 M2

PE console button removed

In 2025.6, following the availability of Puppet Edge, the button to create network devices has been removed in the PE console to simplify the user interface.

Back to topSecurity fixes

It is important to leverage supported releases of software to ensure protection against an ever-evolving threat landscape. As listed in the respective release notes, 2023.8.6 and 2025.6 each incorporate fixes for more than 50 CVEs.

August 5th 2025

We are excited to announce that Puppet Enterprise (PE) 2023.8.5 and 2025.5 (PE and PE Advanced) are now available, including new platform support, as well usability enhancements, security improvements, and other important fixes.

Benefits of upgrading to PE 2025.5:

- Rerun plans from the PE Console

- Various bug fixes and security enhancements

Benefits of upgrading to PE 2025.5 and 2023.8.5:

- Filter plans by name in the PE Console

- Improved file sync configuration parameters

- Agent platform support for Red Hat Enterprise Linux (RHEL) 10 x86_64

- Security, reliability and performance enhancements

For full details of what’s included in each release, see the PE docs:

IMPORTANT: Upcoming Changes to the Puppet Enterprise Lifecycle

Beginning in August 2026, Puppet Enterprise will adopt a new “Latest and Latest-1” support model.

This change will accelerate innovation and simplify lifecycle management by:

- Delivering continuous access to valuable new features

- Improving security and compliance through more frequent updates and patches

- Providing a more predictable, simplified support timeline

Under the new model:

- Latest series: Receives full support (new features, fixes, security updates) for 12 months.

- Latest-1 series: Receives defect fixes and only minor changes for an additional 12 months after being superseded.

This replaces the previous Long-Term Support (LTS) model, which offered up to two years of support with limited feature delivery.

How does this impact current release streams?

- PE 2023.8.z series (LTS) will be the final series supported under the LTS support model. Maintenance releases will continue until August 2026, when the series reaches end of life (EOL). This timing coincides with the launch of the new lifecycle model. Customers should begin planning upgrades to ensure uninterrupted support.

- PE 2025.y series (Current latest): This series will continue receiving the latest updates until August 2026, when the next major PE version is released. At that point, 2025.y will transition to Latest-1 and receive defect fixes and only minor updates until EOL in August 2027.

Further guidance will be provided ahead of the transition in August 2026 but we recommend that planning start now to ensure a smooth transition and to gain from the latest capabilities and associated business outcomes.

Release Notes Learn More About Puppet Enterprise Advanced

June 2025

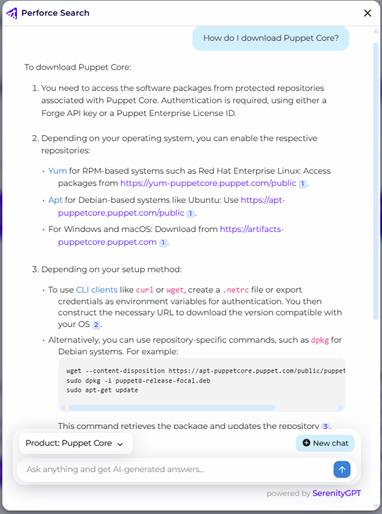

Time spent finding the right information, knowing which services to use, and reliance on Puppet experts slows velocity and creates a productivity bottleneck for infrastructure teams. Infra Assistant, new in PE 2025.4 and exclusive to Puppet Enterprise (PE) Advanced, transforms how teams interact with Puppet by enabling you to talk to your Puppet infrastructure using natural language.

This release also features updates to Advanced Patching as well as usability enhancements, fixes, and expanded platform support.

Back to topNew in this release

Introducing Puppet Infra Assistant (exclusive to PE Advanced)

Infra Assistant, available within the Puppet Enterprise console, safely unlocks infrastructure data and services for everyone — not just Puppet power users. Authorized users can now safely access real-time infrastructure data more easily to make faster, data-driven decisions with confidence.

Customers with PE Advanced licenses are entitled to activate Infra Assistant today. For information about enabling the Infra Assistant, see the docs here. To upgrade to the PE Advanced license, contact our sales team.

Infra Assistant is the first of several exciting AI-powered capabilities we have planned, including agentic code generation and anomaly detection.

Back to topImprovements in this release

Enhancements to Advanced Patching (exclusive to PE Advanced)

In addition to general usability improvements within the Advanced Patching feature, this release introduces support for creating patch groups in the console with fact-based rules for dynamic membership.

Previously, the PE console supported creating patch groups with pinned nodes, while dynamic membership (using fact-based rules) was available only via API.

You can now create patch groups in the console with pinned membership, or using fact-based rules for dynamic membership, or both. At patch job runtime, nodes that match your defined rules, and that aren’t already pinned to another patch group, are included automatically. This update simplifies patching workflows and reduces manual effort.

Back to topUpdates in this release

Updated agent support (PE and PE Advanced)

This release adds agent support for the following operating systems:

- macOS 15 x86_64

- Solaris10 SPARC

- SUSE Linux Enterprise Server (SLES) 15 ppc64

2023.8.3 release notes 2025.3 release notes

Learn More About Puppet Enterprise Advanced

We are excited to announce that Puppet Enterprise (PE) 2023.8.3 and 2025.3 (PE and PE Advanced) are now available.

We’ve expanded primary and agent platform support, as well as incorporated improvements in the popular Advanced Patching with Vulnerability Remediation capabilities introduced exclusively in Puppet Enterprise Advanced. Patching infrastructure in a timely manner remains one of the most important defensive tactics that platform teams can leverage to prevent unplanned outages and compromise. Advanced Patching distributes patches across the infrastructure estate quickly and efficiently at scale.

Benefits of upgrading to PE Advanced 2025.3:

- A variety of usability improvements and bug fixes have been made to Advanced Patching and Vulnerability Remediation

Benefits of upgrading to PE 2025.3 and 2023.8.3:

- Primary platform support for Amazon Linux 2023 has been added

- Agent platform support for Amazon Linux 2023 (FIPS) and Microsoft Windows Server 2025 has been added

- Security and performance enhancements

Release Notes Learn more About Puppet Enterprise Advanced

March 2025

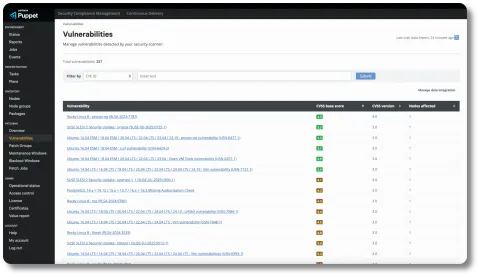

Unresolved vulnerabilities can lead to costly consequences, especially for enterprise organizations where continuous compliance is critical. Vulnerability Remediation, new in PE 2025.2.0 and exclusive to Puppet Enterprise (PE) Advanced, reduces the mean time to remediate vulnerabilities, empowering cross-team collaborative workflows for security and IT operations, ensuring your systems remain secure and compliant.

Also included in this release are improvements to the recently introduced Advanced Patching feature and new agent support for the wider Puppet Enterprise platform.

Back to topNew in this release

Introducing Vulnerability Remediation (exclusive to PE Advanced)

With an ever-increasing volume of common vulnerability and exposures being reported, it is critical to ensure that patches are applied quickly and efficiently. The addition of Vulnerability Remediation within the Advanced Patching feature in the PE console streamlines vulnerability management for enterprise security, enabling:

- Powerful integration: Ingest data from popular security scanners to review vulnerability information, sort by criticality, and apply remediation quickly and easily right from within Puppet. Nessus is integrated out-of-the-box with other scanners supported via an extensible architecture and open API framework.

- Greater collaboration: Reduce mean time to remediate (MTTR) with more efficient information exchange between platform and security teams. (No more clumsy spreadsheets and endless fire drills!)

- Improved resilience: Apply or roll back patches, reconfigure applications, or upgrade to new versions based on CVSS criticality scores for each vulnerability.

Improvements in this release

Enhancements to Advanced Patching (exclusive to PE Advanced)

This release also includes updates to the Advanced Patching feature in PE Advanced, including:

- Comprehensive patch management: Redefine patch groups, configure scheduled patch jobs, and view vulnerability data at the node level.

- Improved scheduling control: Manage existing maintenance schedules and get warnings about potential scheduling overlaps when configuring patch jobs.

- Dynamic patch grouping: Group nodes automatically based on specific criteria defined by your infrastructure needs and patch management process.

- Improved usability: General improvements address bugs and usability issues from previous releases.

Updates in this release

Updated agent support (PE and PE Advanced)

- This release adds agent support for the following operating systems:

- macOS 15 ARM

- Fedora 41 x86_64

- Microsoft Windows Server 2016 FIPS

With the addition of another exclusive, value-added capability, Puppet Enterprise Advanced remains the best way to get the most out of your infrastructure with Puppet. Advanced Patching delivers more value for Puppet Enterprise Advanced users, giving them access to a brand-new approach to patching.

LEARN MORE ABOUT PUPPET ENTERPRISE ADVANCED

Back to topAdvanced Patching

The Advanced Patching module, available exclusively to Puppet Enterprise Advanced customers, lets Puppet admins continuously manage their patching workflows more easily. Features in this initial release include:

- A graphical user interface (GUI) for ease of use

- Dedicated patching groups for nodes

- Support for maintenance and blackout windows

- Fine grained Role-Based Access Control (RBAC)

These features are included alongside the familiar Puppet workflows that you already love.

The initial release of Advanced Patching will receive numerous enhancements throughout 2025.

Advanced Patching is available immediately to Puppet Enterprise Advanced users. At this point, these capabilities cannot be bought individually or applied to other Puppet entitlements and are only available to Puppet Enterprise Advanced customers.

PUPPET ENTERPRISE ADVANCED DEMO

September 19, 2024

With the addition of two exclusive, value-adding capabilities, Puppet Enterprise Advanced remains the best way to get the most out of your infrastructure with Puppet. This simultaneous release of the Observability Data Connector and the enhanced ServiceNow Spoke delivers more value for Puppet Enterprise Advanced users, giving them access to improved monitoring and resource optimization, faster issue resolution, predefined and custom self-service automation workflows, and more.

Back to topFor more information on adding these capabilities to your Puppet Enterprise entitlement, visit the Puppet Enterprise Advanced product page or compare plans on the Puppet Pricing & Plans page.

Observability Data Connector

The Observability Data Connector module, available exclusively to Puppet Enterprise Advanced customers, lets Puppet admins skip the wait and see specific events as they happen. Import key Puppet data into your preferred observability platforms — like Splunk, Data Dog, New Relic, Prometheus, and Grafana — instead of adding yet another tool to their tech stack. Importable data includes:

- Puppet event totals: Shows how many of each event type happened during a report from changed resources to failed resources. Users can use this data to look for issues, identify anomalies, and confirm change consistency between states, environments, and resources.

- Puppet catalog times: Shows the timing of each stage of a Puppet catalog application. This function allows you to see which stages of your catalog are taking the most time so you can identify coding issues early and better manage infrastructure capacity.

Self-Service Automation via the ServiceNow Spoke

The Puppet Spoke for ServiceNow gives platform teams the power to automate without leaving ServiceNow, one of the most popular IT service management platforms (ITSMs) on the market. Select from a number of templated items or create custom automation workflows for your whole team and execute Puppet Tasks and Plans with a click.

This exclusive feature empowers platform teams using Puppet Enterprise Advanced to manage IT infrastructure changes efficiently, troubleshoot problems quickly, and resolve more incidents faster. With the Puppet ServiceNow Spoke, teams have access to:

- Pre-built ServiceNow catalog items: This integration provides a set of pre-defined workflows in the ServiceNow catalog. These template items include routine Puppet tasks like installing the Puppet agent on a node, rebooting a machine, running a package install, managing a service, and more.

- Custom ServiceNow catalog items: With the Puppet Spoke, your teams can also make custom Puppet workflows accessible to non-experts for easy, self-service consumption without needing to log into the Puppet Enterprise console.

The Data Connector and the ServiceNow Spoke are available immediately to Puppet Enterprise Advanced users. At this point, these capabilities cannot be bought individually or applied to other Puppet entitlements, and are only available to Puppet Enterprise Advanced customers.

2023.8.0 LTS RELEASE NOTES 2021.7.9 LTS RELEASE NOTES

DEMO PUPPET ENTERPRISE

August 2024

Back to topPuppet Enterprise 2023.8.0 LTS

The first LTS release built on Puppet 8, 2023.8.0 LTS is designed to support your long-term success with Puppet, featuring improvements to performance, scalability, and security to help your teams automate and manage infrastructure more efficiently.

Whether upgrading from 2021.x LTS or implementing Puppet Enterprise for the first time, Puppet Enterprise 2023 LTS is your gateway to a more robust and secure infrastructure.

Puppet Enterprise 2023.8.0 LTS is the inaugural release of the 2023.x LTS track, which will replace the 2021.x LTS release track after 2021.7.9.

Back to topPuppet Enterprise 2021.7.9 LTS

Alongside the release of our 2023.8.0 LTS, we are also releasing the final version of the 2021.x LTS release track with Puppet Enterprise 2021.7.9. While this version will get security updates and remain supported for the next 6 months, this will be the last major update for this version. In future releases of Puppet Enterprise LTS, the 2021.x LTS release track will be replaced by the 2023.x LTS release track.

In order to continue using the latest version of Puppet Enterprise LTS beyond 2021.7.9, you will need to upgrade to the 2023.x release track.

For information about upgrading to Puppet Enterprise 2023 LTS, see the Puppet documentation on upgrading Puppet Enterprise.

Back to topNew in Puppet Enterprise 2023.8.0 LTS:

- Puppet 8: This release is built on top of Puppet 8, and enhances automation capabilities, offering improved efficiency and security in managing your infrastructure. A complete list of features and improvements contained in Puppet 8 can be found here.

- Improved performance and scalability: Various improvements have been made to our components and services to help improve operational performance and scalability. These include improvements to lockless deploys for Code Manager, PuppetDB, and Orchestrator.

- Improved DR capabilities: We understand the critical nature of maintaining business continuity and have improved the resiliency of promotion and provision actions to help support better disaster recovery processes.

- Updated agent support: This release also adds agent support for the following operating systems:

- Ubuntu 24.04 (amd64 and aarch64)

- RedHat Enterprise Linux 9 ppc64le

- Alma Linux 9 (x86_64 and aarch64)

- Rock Linux 9 (x86_64 and aarch64)

- Amazon Linux 2 (aarch64)

- Fedora 40 (x86_64)

Issues resolved in Puppet Enterprise 2023.8.0 LTS:

This release also contains a variety of bug fixes alongside dependency and security updates. A complete list of changes can be found in the 2023.8.0 release notes here.

EOL for Several Primary Server Platforms

In Puppet Enterprise 2023.8.0 LTS, we've removed support for the following End of Life (EOL) operating systems as infrastructure servers (Primary, Replica, Compiler, or Database) platforms:

- CentOS version 7

- Oracle Linux version 7

- Red Hat Enterprise Linux version 7

- Red Hat Enterprise Linux (FIPS 140-2 compliant) version 7

- Scientific Linux version 7

- Ubuntu 18.04

- SuSE Linux Enterprise Server (SLES) 12

New in Puppet Enterprise 2021.7.9 LTS:

- Legacy facts disabled by default: This ensures that your code base is compatible with Puppet 8 and that there are no issues or blockers with your upgrade path.

- Disabling slow and I/O intensive operation codedirs chown in compiler catalogs.

- Faster Events screen load in the Puppet Enterprise console shows events in the last 30 minutes.

- Updated agent support: This release also adds agent support for the following operating systems:

- Ubuntu 24.04 (amd64 and aarch64)

- RedHat Enterprise Linux 9 ppc64le

- Alma Linux 9 (x86_64 and aarch64)

- Rock Linux 9 (x86_64 and aarch64)

- Amazon Linux 2 (aarch64)

- Fedora 40 (x86_64)

May 21, 2024

In today’s operating reality, organizations need more value from the tools they’re already using. This release adds more features and functionality to Puppet Enterprise to help users do more with their infrastructure, faster and more securely.

With the release of Puppet Enterprise 2023.7, the inclusion of Continuous Delivery lets customers build, test, and deploy Puppet code. Security Compliance Management provides compliance scanning, assessment, and monitoring capabilities within Puppet, using Puppet infrastructure as code to measure security and compliance posture against custom policies and the integrated CIS-CAT® Pro Assessor for maximum compliance visibility.

Additionally, Puppet Enterprise 2023.7 adds previews of premium features to the Puppet Enterprise Console. Get a sneak peek at Impact Analysis (which lets you preview the impact of your next code change on existing configurations before you merge) and Security Compliance Enforcement (policy as code for correcting drift and enforcing desired state configurations hardened against CIS Benchmarks and DISA STIGs) and learn more with new links in the Puppet Enterprise Console.

Puppet Enterprise 2023.7 also includes additional agent support, bug fixes, and security updates to ensure smoother operations, consistent infrastructure uptime, and maximum usability of Puppet Enterprise.

Back to topNew in this release:

- Security Compliance Management is now included with Puppet Enterprise, adding compliance monitoring and assessment insights as part of the same Puppet Enterprise license.

- Continuous Delivery is now included with Puppet Enterprise, adding CI/CD capabilities that let you build, test, promote, and deploy Puppet code and integrate with your current pipelines as part of the same Puppet Enterprise license.

- Security Compliance Management and Continuous Delivery can be accessed from Puppet Enterprise. Users can launch the new consoles by clicking quick links in the Puppet Enterprise console.

- Added agent support for the following operating systems:

- Amazon Linux 2023 amd64

- Amazon Linux 2023 aarch64

- Debian 11 aarch64

- Debian 12 amd64

- Debian 12 aarch64

- macOS 14 ARM

- macOS 14 x86_64

- FIPS 140-2 compliant Red Hat Enterprise Linux (RHEL) 9 x86_64

Issues resolved in this release:

- Fixed an issue where promoting a replica using the disaster recovery workflow could lead to file sync corruption and code deployment failures.

- Fixed an issue where the

recover_configurationcron job could sometimes cause a Puppet server restart. - Included REXML Ruby gem, correcting issues for modules reliant on XML interactions with the REXML library.

- Fixed an issue where pinning a node resulted in the pinned node being incorrectly displayed in the main rules section when a node group was set to match any rule.

- Added use of the full Puppet binary path for backup and restore commands, eliminating certain failures during backup commands (e.g., running the backup command from a cron job).

Security fixes in this release:

- Addressed the following CVEs:

- CVE-2024-22871

- CVE-2024-1597

- CVE-2024-25710

- CVE-2024-26308

- CVE-2023-42503

- CVE-2024-46218

STS RELEASE NOTES LTS RELEASE NOTES DEMO PUPPET

Feb. 6, 2024

You rely on your infrastructure to drive performance, security, and time to value. Puppet Enterprise 2023.6 (STS) and 2021.7.7 (LTS) focus on performance to deliver faster, more efficient automation workflows with a host of updates and enhancements.

Puppet users can expect enhanced increased responsiveness that enables them to meet the demands of modern IT operations faster than before. These releases also improve scalability to ensure that automation remains robust and reliable, even in large IT environments increasing the number of concurrent plans that can run.

Updates in these releases:

PE 2023.6 (STS)

- Identify operational issues affecting infrastructure nodes: The PE console now includes an Operational status page showing the result of the latest checks performed by the

pe_status_checkmodule. Issues requiring your attention are listed under the affected infrastructure nodes. - Run 100+ plans concurrently: PE 2023.6 introduces the

pe-plan-runnerservice, which runs on the primary server by default, allowing concurrent execution of up to 100 plans.pe-plan-runneralso has the potential for further scaling based on available memory on the primary server. - Include or exclude catalog resource edges in catalogs sent to PuppetDB.

- A new guided workflow for configuring and running task jobs.

- Specify ciphers to use when establishing connections to configured LDAP servers.

- Added agent support for AIX 7.3.

PE 2021.7.7 (LTS)

- Added agent support for AIX 7.3.

Issues resolved in these releases:

PE 2023.6 (STS)

- Fixed issues where restoring PE from a backup could fail when

puppetagent was running, if lockless code deployments were enabled, or when setting theclassifier_hostparameter. - Fixed an issue where upgrading the agent to Puppet 8 on FIPS-compliant RHEL 7 or 8 could cause the puppet service to stop unexpectedly.

PE 2021.7.7 (LTS)

- Fixed issues where restoring PE from a backup could fail when puppet agent was running, if lockless code deployments were enabled, or when setting the

classifier_hostparameter.

Security fixes in these releases:

PE 2023.6 (STS) & PE 2021.7.7 (LTS)

- Critical updates to Java, Postgres, and logback.

- Addressing CVE-2023-6378, CVE-2023-6378, CVE-2023-40167, CVE-2023-36479, CVE-2023-41900, CVE-2023-5869, CVE-2024-20952, CVE-2024-20918, CVE-2023-44487, CVE-2023-5072, CVE-2024-20932

STS RELEASE NOTES LTS RELEASE NOTES DEMO PE 2023.4

STS: Oct. 3, 2023

LTS: Sept. 2023

Your increasingly complex IT environment needs to move faster than ever, so we’ve updated Puppet to ensure your IT operations teams can keep pace to drive better performance and scale your enterprise infrastructure. The 2023.4 release of our leading-edge PE release stream (also referred to as STS) includes powerful new improvements that will help you increase operational efficiency by maximizing resources and streamlining processes.

New in these releases:

PE 2023.4 (STS)

- Puppet 8: PE 2023.4 includes Puppet 8, the eighth full release of Puppet’s open source code. Puppet 8 includes updates to configuration reporting, protections for user inputs, and more. Additionally, access to the latest OS versions like OpenSSL 3 and Ruby 3.2 will improve performance and add additional security.

- Effortless certificates management: Auto-renewal alleviates the challenge of manually reinstating expired certificates across your IT.

- Orchestrator enhancements: Improvements to the Orchestrator constrain task concurrency to individual jobs rather than sharing across jobs, allowing independent jobs to start execution without latency.

- PuppetDB efficiency improvements: PuppetDB will make sure reports run smarter with optimized indexes, increasing speed and efficiency.

- New OS support for primary and secondary: MacOS 13 (ARM and x86_64) Agents, Ubuntu 22.04 & RHEL 9 Primary

- Enhanced UI: Edit scheduled plans effortlessly with a simplified interface.

- More enhancements: This release includes several changes designed to increase efficiency and usability.

PE 2021.7.5 (LTS)

- Classifier service flags unmappable legacy facts in node group rules: PE 2021.7.5 generates warning messages to flag uses of certain legacy facts that don’t map to equivalent structured facts in Puppet 8.

- New OS support for primary and secondary: MacOS 13 (ARM and x86_64) Agents, Ubuntu 22.04 and RHEL 9 Primary

- Customizable HTTP-client limits in Orchestrator: Specify connection limit parameters to match infrastructure requirements.

- Configurable socket timeout in Orchestrator: Specify the maximum time before socket timeout by changing the default value.

- Improved error logging for the puppet backup command: In PE 2021.7.5, descriptive error messages will display in the terminal and log file, instead of generic messages displayed in previous releases.

- Added primary server platforms: RHEL 9 x86_64, Ubuntu 22.04 amd64

- Added agent platforms: macOS 13 ARM and x86_64

- Added client tools platforms: macOS 13 ARM and x86_64

- Added patch management platforms: RHEL 9 x86_64

- Removed agent platforms: CentOS 7 aarch64, macOS 10.15, Oracle Linux 7 aarch64, Red Hat 7 aarch64, Scientific Linux 7 aarch64

- Removed client tool platform: macOS 10.15

Issues resolved in these releases:

PE 2023.4 (STS)

- Fixed an issue in which installing a Windows agent through the console could fail when the Test Connections checkbox was selected.

PE 2021.7.5 (LTS)

- Fixed an issue in which installing a Windows agent through the console could fail when the Test Connections checkbox was selected.

Security fixes in these releases:

PE 2023.4

- Addressed CVE-2023-5255.

PE 2023.1 RELEASE NOTES PE 2021.7.3 RELEASE NOTES

These patch releases include fixes and performance upgrades for existing features.

These releases represent the latest updates in the Puppet Enterprise (PE) 2023 and 2021 streams, following the releases of PE 2023.0 and PE 2021.7.2 LTS in January 2023. These new, backward-compatible releases contain fixes and performance upgrades for existing features and functionality.

For a detailed list of enhancements and fixes in PE 2023.1, see the PE 2023.1 release notes.

For a detailed list of enhancements and fixes in PE 2021.7.3, see the PE 2021.7.3 release notes.

For security and vulnerability announcements, see CVE Content.

Performance enhancements in these releases:

- Improved performance when querying PuppetDB

- Improved performance for several functions in the Puppet language

- More reliable warnings when updating Puppet Server

Deprecation of Pure JavaScript Open Notation (PSON) for serializing data in Puppet 7

Resolved issues in these releases:

- Tasks page is available following a software update

- Enabling the lockless code deploy feature no longer causes performance issues in PuppetDB catalog compilation

- Performance issue with Puppet agent runtimes is resolved

- Certificates and keys can be backed up and restored by specifying the certs scope

- Updates implemented to help users enter valid URLs

- Timeouts can be specified for SAML authentication

- User-defined temporary directory is honored during PE restore operations

- Issue that caused an unexpected increase in CPU usage is resolved

Additional issues resolved in PE 2023.1, related to new features in 2023.0:

- Scheduled task jobs run successfully without a defined timeout

- Timeout and concurrency values for scheduled tasks can be viewed and edited in the console

- When tasks are rerun in the console, timeout and concurrency attributes are preserved

- Access rights for remote users can be revoked and reinstated from the console

Security fixes in both releases:

- CVE-2023-1894

- CVE-2023-26048

RELEASE NOTES UPGRADE START PE TRIAL

April 2023

Puppet powers better infrastructure in thousands of organizations worldwide – and changes to Puppet source code always target better efficiency, scalability, and usability. Puppet 8 is the biggest update to Puppet since Puppet 7’s first release in November 2020. Along with a host of behind-the-scenes updates, Puppet 8 adds brand-new features that ensure better security, reliability, and performance, like automatic certificate renewal and an error-proof Strict Mode.

Puppet 8 is included in all Puppet Enterprise releases since PE 2023.4 (STS) and PE 2021.7.5 (LTS).

New in this release:

- Updates to certificate management: Automatic certificate renewal addresses a huge IT ops pain point. With customizable automatic certificate management, Puppet 8 reduces the toil of managing certificates across large fleets, enabling faster security fixes and continuous compliance across your infrastructure.

- Ruby 3.2 and OpenSSL 3: With Puppet 8, Puppet is now on the latest branch of Ruby 3.2 and OpenSSL 3, reducing vulnerability scanning concerns.

- Strict Mode: Puppet 8 introduces Strict Mode, which throws an error rather than allowing incorrectly passed changes (like typos). Strict Mode helps avoid both costly mistakes and unauthorized attempts to reassign variables.

- Default exclusion of unchanged resources from reporting: Unchanged resources will no longer appear in reporting by default, reducing the amount of digging needed to get to actionable insights from each Puppet run.

- Dropping Hiera 3: Removing Hiera 3 trims down the Puppet 8 install for better efficiency and performance.

- If you rely on a Hiera 3 backend, you must convert your backend to Hiera 5.

- Default lazy evaluation of deferred functions: Operate with greater mobility and security when accessing something you don’t want passed through the Puppet infrastructure nodes (e.g., vault secrets).

Puppet 2023.0 is the latest release following 2021.7, now using updated versioning. It’s a backward-compatible release that contains enhancements and resolved issues from our previous major release.

Here are the highlights of Puppet Enterprise 2023.0:

- NIST compliance: Puppet 2023.0 ensures that sensitive information is cleared when a session times out. You can customize the timeout to specify a default value and issue a confirmation message. In this way, you reduce compliance risk for InfoSecOps and administrators. This feature is designed for compliance with National Institute of Standards and Technology (NIST) guidelines.

- Authenticate users in multiple Lightweight Directory Access Protocol (LDAP) domains: Use a prioritized list of LDAP servers to get credentials. In this way, you reduce compliance risk and increase operational efficiency for administrators.

- Streamlined user interface for tasks and plans: Increase observability, throughput, fault tolerance, and operational efficiency with new job and task queue status, task concurrency fine-tuning, default job timeouts, and the capability to stop stalled jobs. View and edit task parameters, targets, and other details. The new functionality is designed to benefit users, operators, and managers.

- Scalability performance improvements to deploy and manage more nodes: Increase operational efficiency and accelerate time to value with new orchestrator task concurrency defaults and improvements. Reporting, database performance, and agent certificate regeneration improvements are provided as well to benefit all users.

Component Updates

- Java 17

Deprecations

Removed primary server platforms:

- CentOS 8

Removed agent platforms:

- CentOS 8

- Debian 9

- Fedora 32

- Fedora 34

- Ubuntu 16.04

Removed patch management platforms:

- Debian 9

- Fedora 34

Puppet Core

Release Notes Explore Puppet Core

Puppet Core 8.18 reinforces the role of Puppet as a stable, vendor-backed foundation for infrastructure automation. This release combines expanded platform coverage with continued security hardening, helping teams reduce operational risk while keeping pace with modern operating systems.

As with all Puppet Core releases, these updates are delivered as tested, hardened builds, removing the need for teams to manage, validate, or certify dependencies on their own.

Back to topA Trusted Automation Foundation, Kept Current

Organizations rely on Puppet Core to provide consistent, reliable automation in environments where security, compliance, and uptime matter. Puppet Core 8.18 continues this approach by strengthening the underlying platform without introducing disruption or forcing changes to established workflows.

This release reflects Puppet’s ongoing commitment to maintaining a clean, supported automation foundation that evolves alongside customer infrastructure.

Expanded macOS Coverage

Puppet Core 8.18 extends support for macOS 15 across both x86_64 and ARM architectures. This allows teams to continue managing macOS systems using the same controls and policies they rely on today.

By delivering macOS agent support in Puppet Core, teams can adopt new operating system versions without the overhead of custom packaging or independent validation.

Security Hardening Embedded in the Platform

This release includes updates to foundational components that help maintain alignment with current security expectations. Rather than requiring customers to track upstream changes or assess individual fixes, Puppet Core bundles these improvements as part of a ongoing release lifecycle.

The result is a platform that continuously strengthens security posture while preserving predictable behavior and operational stability.

Why Upgrade?

Upgrading to Puppet Core 8.18 helps organizations:

- Maintain automation coverage as operating systems evolve.

- Reduce security exposure through CVE remediation and tested updates.

- Rely on a hardened, vendor-backed foundation instead of self-maintained builds.

- Support audit, compliance, and governance objectives with less operational overhead.

Puppet Core 8.18 helps ensure your automation platform remains secure and ready for what comes next.

Learn More

For detailed technical information, including information about CVEs that have been addressed, review the Puppet Core 8.18 release notes.

Release Notes Explore Puppet Core

Puppet Core 8.17 strengthens the security foundation of your infrastructure automation by updating critical components and reducing exposure to known vulnerabilities—helping teams stay compliant, lower risk, and maintain confidence in their automation pipelines without disrupting daytoday operations.

Puppet Core 8.17 includes updates to several foundational dependencies to address recently disclosed security vulnerabilities. These updates help ensure Puppet remains a trusted part of your infrastructure, especially in environments with strict security and compliance requirements.

Key security enhancements include:

- Updated OpenSSL (3.0.19)

Addresses multiple highimpact CVEs, helping protect encrypted communications and sensitive data across your Puppet infrastructure. - Updated Ruby (3.2.10)

Includes security fixes that improve the resilience of the runtime used by Puppet, reducing exposure to known vulnerabilities. - Updated curl (8.18.0)

Resolves several security issues related to data transfer and network communication, improving the overall security posture of agents and services.

These updates are designed to be seamless—delivering improved protection without changing how you manage or operate Puppet.

Cleaner, More Maintainable Platform

Puppet Core 8.17 also removes legacy components that are no longer needed, helping streamline the platform and reduce longterm maintenance risk.

- Ruby API removed from leatherman

The removal of this deprecated API simplifies the codebase and aligns with modern, supported integration patterns. - Brotli and zstd removed from agent curl builds

These compression libraries have been removed from agent curl builds to reduce complexity and attack surface. This change does not impact the functionality of Puppet or PXP agents.

Why Upgrade?

If security, compliance, and platform stability are priorities for your organization, Puppet Core 8.17 provides important updates that help you:

- Reduce exposure to known vulnerabilities

- Stay aligned with modern security expectations

- Maintain a clean, supported automation foundation

Upgrading ensures you continue to receive the latest security improvements and platform updates from Puppet.

Puppet Edge is available now as a new capability for Puppet Core, extending automation, compliance, and governance capabilities beyond servers to encompass network and edge devices.

With available features like Playbook Runner that retains value from prior automation investments, consistent policy management across distributed systems, embedded visibility into device state, and seamless integration with Puppet workflows, organizations can apply the same trusted automation and governance used for servers to edge environments – achieving broader consistency and control across hybrid infrastructure estates.

Release Notes Explore Puppet Core

Puppet Core 8.16.0 is a security-focused release that delivers critical updates to keep your infrastructure automation protected and stable. While no new product features are introduced in this version, it includes important updates to several underlying components—including Thor, curl, REXML, OpenSSL, and the URI gem—to address recently disclosed CVEs.

This release highlights the value of using Puppet’s fully supported, enterprise-grade distribution rather than relying solely on community-maintained open source packages. With Puppet Core, customers benefit from proactive vulnerability monitoring, tested dependency updates, and timely security patches delivered as part of a predictable release cadence.

Key security updates include:

• Updated Thor gem to address CVE-2025-54314

• Updated curl to address CVE-2025-9086 and CVE-2025-10148

• Updated REXML gem for CVE-2025-58767

• Updated OpenSSL to resolve CVE-2025-9230 and CVE-2025-9232

• Patched URI gem for CVE-2025-61594

By staying current with Puppet Core, organizations ensure they remain protected from emerging vulnerabilities and continue receiving reliable, vendor-backed updates throughout the product lifecycle.

Release Notes Explore Puppet Core

September 10, 2025

This release implemented several updates to improve error messages and help prevent security vulnerabilities.

Improved Error Messages

Puppet now reports when binary data is found in the catalog. The error message indicates the manifest file and line number that caused the problem to assist with troubleshooting.

Security Fixes & Hardened Dependencies

libxslt removed – Resolves:

CVE-2025-7424

CVE-2025-7425.

On macOS, nokogiri was replaced with libxml-ruby to remove the libxslt dependency. If you require the nokogiri gem on macOS, please install it separately.

resolv gem shipped with Puppet agent was patched (note: although the vulnerability was patched, the Ruby and resolv gem version numbers did not change) - Resolves:

CVE-2025-24294.

Resolved Issues

Puppet Core 8.14.0 included a version of Ruby compiled against a version of glibc available only on SLES 15 systems using service pack 6 and later. For systems running SLES 15 with earlier service packs this caused ‘glibc not found’ errors. This was resolved by compiling Ruby against an older version of glibc.

Deprecations and removals

Support was removed for two end of life OS versions. The following are no longer available as Puppet Core agents:

- macOS 11 Big Sur (x86_64)

- macOS 12 Monterey (x86_64 and ARM)

For full details of what’s included in this release, see the Puppet Core docs: 8.15 release notes

Release Notes Explore Puppet Core

July 2025

We’re excited to announce the release of Puppet Core 8.14.0! This release strengthens the platform’s enterprise-grade resilience with improved documentation usability and hardened security updates.

Back to topAI-Powered Search for Documentation

The Puppet Core documentation now features an intelligent search experience powered by AI. Ask natural-language questions right from the docs homepage and get answers sourced from both current product documentation and the Perforce knowledge base. It's never been faster to find the answers you need.

Back to top

Back to top

Security Fixes & Hardened Dependencies

Continuing our commitment to security and SLA-backed patching, this release includes critical updates to core components:

- net-imap upgraded to v0.3.9 – Resolves

- CVE-2025-43857

- curl upgraded to v8.14.1 – Resolves:

- CVE-2025-5025

- CVE-2025-4947

- CVE-2025-5399

- libxml2 upgraded to v2.14.5 – Resolves

- CVE-2025-6021 and others

Bug Fixes

We’ve resolved an issue where Ruby would recursively scan the entire lib directory due to a gemspec problem. This fix improves loading behavior for both puppet and facter gems.

Release Notes Explore Puppet Core

June 2025

Released June 2025, Puppet Core 8.13.0 delivers critical security updates and resolves key issues that improve platform reliability across environments, including enhanced behavior on Windows systems.

Back to topSecurity updates in this release

This release includes several important vulnerability patches to strengthen Puppet Core’s hardened foundation:

libxml2 updated to v2.13.8 to address CVE-2025-32415 and CVE-2025-3241

boost updated:

Patched v1.73.0 for AIX, Solaris, and Windows

Updated to v1.80.0 for all other platforms (CVE-2012-2677)

augeas patched to resolve CVE-2025-2588

rapidjson patched (via leatherman) to resolve CVE-2024-39684 and CVE-2024-38517

Bug Fixes

- Resolved Puppet Config Command Failure

Previously, puppet config would fail after a fresh Puppet gem install due to missing parent directories. The expected directory structure is now reliably created on first run. - Windows Service Logon Case Sensitivity Fixed

Puppet now treats Windows service logon accounts as case-insensitive. This prevents unnecessary Puppet service restarts caused by case mismatches in logon names.

Release Notes Explore Puppet Core

April 2025

No organization can afford the risk of unaddressed vulnerabilities — and with Puppet Core, we continue to prioritize reliability, resilience, and security in each release. Puppet Core 8.12.0 addresses 12 security vulnerabilities identified in third-party libraries, including seven with “critical” or “high severity” CVSS scores.

These vulnerabilities, which affect both Open Source Puppet and Puppet Core, present a range of risks, including credential leaks, crashes, and system instability due to memory handling and overflow issues. Additionally, this release includes enhanced error messaging, reducing confusion for custom Hiera lookups.

Back to topSecurity fixes in this release

Updated Third Party Components to Mitigate 12 Vulnerabilities

- Upgraded Ruby to version 3.2.8 to address:

- CVE-2025-27219

- CVE-2025-27220

- CVE-2025-27221

- Upgraded OpenSSL to version 3.0.16 to address:

- CVE-2024-13176

- CVE-2025-0306

- Upgraded Curl to version 8.12.0 to address:

- CVE-2025-0725

- CVE-2025-0665

- CVE-2025-0167

- Upgraded Libxml2 to version 2.13.6 to address:

- CVE-2025-24928

- CVE-2024-56171

- Upgraded Libxslt to version 1.1.43 to address:

- CVE-2024-55549

- CVE-2025-24855

Default Server Trust Updated

- The server setting is no longer defaulted to

puppet, preventing unintended trust relationships when running as an unprivileged user.- Addresses CVE-2024-9128

New in this release

Enhanced Error Messaging for Custom Backend Hiera Lookups

- Say goodbye to cryptic error messages! When using custom backends in Hiera, missing modules now trigger detailed errors identifying the affected module, enabling faster diagnosis and resolution.

Deprecated in this release

Puppet Core 7 EOL

- Puppet 7 is officially end of life. Upgrade to Puppet 8 to maintain support and access to the latest updates.

RELEASE NOTES LEARN MORE ABOUT PUPPET CORE

February 20, 2025

Digital threats continue to grow in frequency and complexity, and risk is growing across every phase of software development. Organizations need to adopt applications that are created and supported by trusted, reputable vendors offering SLAs that meet internal and external compliance requirements.

Back to topPuppet Core: Vendor-Backed, Secure & Stable

Perforce is excited to announce Puppet Core, an enterprise-ready platform built on the foundation of open source Puppet. Puppet Core delivers reliable, stable, and secure vendor-backed software with guaranteed SLAs to meet internal and external requirements for compliance.

Puppet Core is developed, maintained, and supported by Perforce, a trusted vendor for over 30 years to enterprise organizations around the globe. This means increased confidence in your software supply chain and not having to navigate the complexities and resource drain of building, maintaining, and certifying builds on your own.

Back to topPuppet Core is available immediately with commercial licenses for organizations needing more than 25 nodes.

Included in Puppet Core:

Puppet Core software builds are rigorously tested to the highest security standards, delivering performance and continuous operational resilience for critical infrastructure and applications.

- Hardened Puppet binaries housed in a secure repository

- Guaranteed SLAs for high and critical severity CVEs

- Core essential agents, including the latest downstream version

- Requests for fixes and vulnerabilities

- Training engagement with Certified Puppet Engineers, including guidance on Puppet Core resources and an introduction to Security Compliance Enforcement

- Security Compliance Enforcement, a premium feature for implementing and continuously maintaining alignment with CIS Benchmarks and DISA STIGs using policy as code

Always-On Audit Readiness

Puppet Core provides vendor-guaranteed SLAs, giving you regulatory compliance and "always-on" audit readiness. The Security Compliance Enforcement modules for Windows and Linux are pre-built modules maintained by Puppet, providing peace of mind that your infrastructure is in a continuous desired state and hardened against industry-recognized security baselines.

Back to topNew in this release:

- Added support for the following operating systems for Developer users:

- Fedora 41

- macOS 15

Puppet Edge

September 23, 2025

Extending the Power of Puppet to the Edge

We are excited to announce Puppet Edge, a new capability for Puppet Core and the Puppet Enterprise platform. Puppet Edge extends enterprise-grade automation, governance, and compliance to network peripherals and edge devices with the same trust and consistency you rely on for servers, VMs, and cloud. Puppet Edge preserves prior automation investments, reduces complexity, and enables secure, scalable infrastructure management across your entire hybrid infrastructure estate using a single, unified platform.

Included in Puppet Edge:

- Unified Automation – Extend Puppet automation to firewalls, network peripherals, POS systems, and other edge devices using a single, unified solution.

- Seamless Integration – Works with Puppet Core and the Puppet Enterprise platform to provide enterprise-grade governance across your entire hybrid infrastructure estate.

- Flexible Agent + Agentless Design – Utilize NETCONF and YANG, SSH, and WinRM to manage non-OS devices while continuing to benefit from the power of Puppet Agents for Linux and Windows servers.

- Consistent Policy Management – Apply configuration, security, and compliance standards across hybrid and edge environments.

- Playbook Runner premium module – Execute existing playbooks and ad-hoc commands from tools like Ansible® as tasks directly within Puppet, without additional orchestration tools or license costs.

- EdgeOps premium module – provides tools and content samples for managing Puppet Edge network devices with the Puppet Enterprise platform.

Puppet Enterprise Advanced customers also get access to:

- Workflows – enables imperative orchestration of edge devices. Workflows are designed to help simplify development and execution of IT operations automation. Power users can build and share complex workflows, allowing teammates to execute sophisticated orchestration without deep Puppet knowledge.

- Infra Assistant: code assist – enabling you to use AI to generate content to help manage your devices.

Contact the Puppet sales team for information about licensing network and edge devices.

Security Compliance Management (formerly Puppet Comply)

SCM Release Notes Demo Puppet Enterprise Advanced

We are excited to announce that Security Compliance Management (SCM) 3.6.0 is now available.

SCM is included with the Puppet Enterprise platform and helps teams continuously assess systems against recognized security benchmarks to reduce risk and prove compliance. It replaces manual checks with consistent, auditable insight into where environments meet policy and where action is needed.

What is New in Security Compliance Management 3.6.0

This release strengthens how assessments are run, expands the scope of benchmarks you can evaluate against, and closes several security gaps, making it easier to defend audit readiness while maintaining a hardened operational posture.

Broader Compliance Coverage Without Extra Complexity

Security Compliance Management 3.6.0 now includes the full set of CIS-CAT Pro Assessor benchmarks, including those not formally supported by SCM.

Why this matters:

- Teams can assess more systems and configurations against CIS guidance.

- Security and compliance leaders gain greater visibility into gaps that previously went unmeasured.

- Audit preparation becomes less about exceptions and more about evidence.

This change reduces blind spots and gives organizations more confidence that their compliance posture reflects real world environments.

Safer Execution That Lowers Operational Risk

This release changes how temporary files and installers are handled, removing reliance on the /tmp directory and automatically cleaning up outdated CIS-CAT Pro Assessors.

The outcome:

- A smaller attack surface during scans and upgrades.

- Less risk of leftover artifacts that could be misused or flagged during audits.

- Cleaner, more predictable runtime behavior in locked down or regulated environments.

For teams operating under strict security controls, these improvements reduce friction with internal security reviews and platform hardening standards.

Stay Current With Modern Platforms and Benchmarks

Support is now included for newer operating systems and refreshed benchmarks across Windows, Linux, and macOS.

This ensures:

- Newer platforms like Windows Server 2025, RHEL 10, and the latest macOS releases can be assessed as they enter production.

- Benchmark updates reflect current CIS guidance, not outdated controls.

- Compliance tooling keeps pace with infrastructure modernization efforts.

This alignment helps organizations move forward with upgrades while maintaining compliance.

Security Hardening Built Into the Platform

Multiple security fixes are included in this release, including changes that eliminate the possibility of remote execution during scans and address several CVEs across dependencies.

The business impact:

- Reduced exposure from known vulnerabilities.

- Fewer findings from internal security reviews.

- Greater trust in the compliance tooling itself as part of the security stack.

Why Upgrade to 3.6.0

Upgrade to Security Compliance Management 3.6.0 if you want to:

- Reduce audit and compliance blind spots with broader benchmark coverage.

- Lower operational and security risk during assessments.

- Maintain confidence as you adopt newer operating systems and platforms.

- Ensure your compliance tooling meets the same security standards you expect from the rest of your environment.

This release is about strengthening trust in your compliance process, from scan execution to audit reporting.

August 5th 2025

Perforce Puppet announces (effective February 5, 2026), the end of life (EOL) for:

- Comply 2.x, the legacy version of Security Compliance Management (SCM).

Future development will now focus exclusively on SCM 3.x, which offers greater reliability, reduced dependencies, and ongoing innovation.

Customers currently using older versions are encouraged to migrate to the latest releases to avoid disruption and ensure continued support. Active maintenance and migration assistance will be provided up to the EOL date, with an option for extended support available as a premium offering.

3.5 RELEASE NOTES 2.25.0 RELEASE NOTES

DEMO PUPPET ENTERPRISE ADVANCED

July 11, 2025

We are excited to announce that Security Compliance Management (SCM) 3.5.0 and 2.25.0 are now available.

SCM is included with PE and PE Advanced to enable organizations to quickly assess infrastructure configurations against CIS Benchmarks and DISA STIGs using the integrated CIS-CAT Pro Assessor from the Center for Internet Security (CIS).

Back to topNew in this release:

Flexible Java management

The Comply module used for SCM now includes the option to use a locally installed Java runtime, instead of the Java bundled with the CIS-CAT Pro Assessor.

- Toggle between using a compatible local Java installation or the bundled Java.

- When the locally-installed Java version is specified, the bundled Java is automatically removed to streamline your environment.

- If you choose to revert to the bundled Java, it is automatically reinstalled.

This enhancement is ideal for customers with specific Java version requirements and those looking to align with internal security and compliance policies.

Secrets management for Podman installs

Starting in version 3.5.0, Podman-based installations use a secrets management mechanism to handle passwords and other sensitive information.

CIS-CAT Pro Assessor updates

SCM 3.5.0 and 2.25.0 both include the CIS-CAT Pro Assessor v4.55.0, featuring important security fixes and numerous updates to operating system benchmarks.

Updated benchmarks:

- CIS Ubuntu Linux 24.04 LTS STIG Benchmark v1.0.0 (new)

- CIS Microsoft Windows Server 2022 Benchmark v4.0.0 (updated from v3.0.0)

- CIS Microsoft Windows 10 Enterprise Benchmark v4.0.0 (updated from v3.0.0)

- CIS Microsoft Windows 10 Stand-alone Benchmark v4.0.0 (updated from v3.0.0)

- CIS Microsoft Windows 11 Stand-alone Benchmark v4.0.0 (updated from v3.0.0)

- CIS Microsoft Windows Server 2019 Benchmark v4.0.0 (updated from v3.0.1)

- CIS Apple macOS 13.0 Ventura Benchmark v3.1.0 (updated from v3.0.0)

- CIS Microsoft Windows Server 2022 Stand-alone Benchmark v1.0.0 (new)

- CIS Red Hat Enterprise Linux 9 STIG Benchmark v1.0.0 (new)

- CIS Apple macOS 14.0 Sonoma Benchmark v2.1.0 (updated from v2.0.0)

- CIS Apple macOS 15.0 Sequoia Benchmark v1.1.0 (new)

- CIS SUSE Linux Enterprise 12 Benchmark v3.2.1 (updated from v3.2.0)

Removed benchmarks:

- CIS Apple macOS 11.0 Big Sur Benchmark v4.0.0

- CIS Oracle Linux 7 Benchmark v4.0.0

- CIS Red Hat Enterprise Linux 7 Benchmark v4.0.0

- CIS Red Hat Enterprise Linux 7 STIG Benchmark v2.0.0

- CIS CentOS Linux 7 Benchmark v4.0.0

- CIS Debian Linux 10 Benchmark v2.0.0

- CIS Ubuntu Linux 18.04 LTS Benchmark v2.2.0

RELEASE NOTES

SEE PUPPET ENTERPRISE ADVANCED IN ACTION

April 30, 2025

We’re excited to announce the release of Security Compliance Management (SCM) versions 3.4.0 and 2.24.0.

SCM is included with Puppet Enterprise (PE) and PE Advanced and enables organizations to quickly assess infrastructure configurations against CIS Benchmarks and DISA STIGs using the integrated CIS-CAT Pro Assessor from the Center for Internet Security (CIS).

Both releases have been updated with the latest version of the CIS-CAT Pro Assessor, enabling Puppet Enterprise customers to monitor compliance with the all operating system benchmarks supported by the Center for Internet Security (CIS).

Back to top

New in this release:

CIS-CAT Pro Assessor updates

SCM 3.4.0 and 2.24.0 include version 4.52.0 of the CIS-CAT Pro Assessor.

Updated benchmarks

- Apple macOS 12.0 Monterey v4.0.0

- Apple macOS 12.0 Monterey Cloud-tailored v1.1.0

- Apple macOS 14.0 Sonoma Cloud-tailored v1.1.0

- Microsoft Windows 11 Enterprise v4.0.0

- Microsoft Windows Server 2019 STIG v3.0.0

- Microsoft Windows Server 2022 STIG v2.0.0

- Red Hat 8 STIG v2.0.0

- SUSE Linux Enterprise 15 v2.0.1

- Ubuntu Linux 20.04 LTS v3.0.0

Removed benchmarks:

- CIS Microsoft Windows Server 2012 (non-R2) Benchmark v3.0.0

- Microsoft Windows Server 2012 R2 Benchmark v3.0.0

Java run-time environment update

The JRE included in the assessor bundle is updated to Amazon Corretto v8.442.06.1

Platform support for SCM

SCM can now be installed on the following operating systems:

- AlmaLinux 9

- Rocky Linux 9

- Oracle Linux 9

- Debian 12

Resolved issues

3.4.0 and 2.24.0

- Fixed an issue affecting the export service.

- Fixed an issue where scans showed success but reported 0% results due to an OS mismatch.

- Security fixes for a range of CVEs.

3.4.0 only

- Fixed an issue where the offline install bundle did not properly include the .modules directory.

RELEASE NOTES DEMO PUPPET ENTERPRISE

Aug. 16, 2024

This release of Security Compliance Management includes an enhancement that improves reliability, accuracy, and scalability of scheduled scans when onboarding new nodes to Puppet. Additionally, an update to the user experience improves flexibility by permitting the modification of key settings (including inventory refresh intervals and data retention settings) anytime versus only during installation, allowing for more efficient management.

This release also includes CIS Benchmark updates, fixes for a known issue, and an update to address a critical vulnerability in an open-source identity and access management (IAM) tool leveraged by Puppet.

(Release notes for the functionally equivalent Puppet Comply 2.22.0 can be found here.)

Back to topNew in this release:

- Dynamically target nodes for scheduled scans: This timesaving feature removes the manual effort of editing scheduled scans whenever new nodes are onboarded. Now, when scheduling scans in the Security Compliance Management Console, customers can target nodes dynamically by specifying the node groups to scan, so that scans run automatically on all nodes that belong to the specified node groups at the scheduled times.

- Configure the inventory refresh and data retention settings: This enhancement gives more flexibility and control to our customers. Previously, inventory refresh intervals and data retention settings were configurable only during the installation process. Now, to improve user experience, the Settings page in the Security Compliance Management Console allows customers to adjust these two settings at any time.

- Inclusion of CIS-CAT® Pro Assessor v4.43.0 (released July 11, 2024).

- Security Compliance Management is regularly updated with the latest version of the CIS-CAT Pro Assessor, which assesses system compliance with CIS Benchmarks to generate actionable insights.

- Added support for the latest CIS Benchmarks for the following operating systems:

- Apple macOS 12.0 Monterey Benchmark v3.1.0

- Apple macOS 13.0 Ventura Benchmark v2.1.0

- Microsoft Windows Server 2019 Stand-alone v2.0.0

- Oracle Linux 9 Benchmark v2.0.0

- Red Hat Enterprise Linux (RHEL) 9 Benchmark v2.0.0

Issues resolved in this release:

- Fixed a bug that could prevent node selection when creating an ad hoc desired compliance scan.

Security fixes in this release:

- Resolved the following CVE:

- Upgraded KeyCloak to v25 to address CVE-2023-2976.

RELEASE NOTES DEMO PUPPET ENTERPRISE

June 27, 2024

With this release of Security Compliance Management (formerly Puppet Comply), Puppet Enterprise users can now set their desired compliance defaults for each operating system (OS), saving valuable time when adding new nodes to a common OS.

We’ve added instructions for performing data backups and a guided tutorial for conducting ad hoc scans using the REST API available with Security Compliance Management. This release also includes CIS Benchmark updates, fixes for several known issues, and security updates.

Back to topNew in this release:

- Desired compliance can be set for operating systems. Any node added to that OS will automatically be assigned the default benchmark and profile you set for that OS.

- Added instructions on data backup: Security Compliance Management now includes instructions for backing up your data to make it easier to restore systems in a disaster recovery scenario.

- REST API tutorial: A new tutorial guides users through running an ad hoc scan in Security Compliance Management using the REST API added in 2.18.0.

- journald logging instructions: Added instructions on how to access relevant log files with the journald logging driver.

- Inclusion of CIS-CAT® Pro Assessor v4.42.0 (released May 30, 2024).

- Security Compliance Management is regularly updated with the latest version of the CIS-CAT Pro Assessor, which assesses system compliance with CIS Benchmarks to generate actionable insights.

- Added support for the latest CIS Benchmarks for the following operating systems:

- Debian Linux 12 Benchmark v1.0.1

- Microsoft Windows 11 Stand-alone Benchmark v3.0.0

- Microsoft Windows Server 2019 Benchmark v3.0.1

Issues resolved in this release:

- Fixed an issue that could prevent existing scheduled scans from running after migrating from Security Compliance Management version 2.x to 3.x.

- Fixed an issue where the search box on the exceptions page wouldn’t accept input.

- Fixed an issue that was causing macOS nodes to be listed as Darwin on the Inventory page, which prevented the desired compliance from being set for those nodes.

Security fixes in this release:

- Resolved the following CVEs:

- Updated braces to address CVE-2024-4068

- Updated KeyCloak to address CVE-2024-2961, CVE-2024-33599, CVE-2024-2700, CVE-2024-1132, CVE-2024-1249, CVE-2024-2419, CVE-2024-3656, GHSA-69fp-7c8p-crjr

- Updated oauth2-proxy to address CVE-2023-5363

RELEASE NOTES DEMO PUPPET ENTERPRISE

May 7, 2024