Troubleshooting

Use this guide to troubleshoot issues with your Continuous Delivery for Puppet Enterprise (PE) installation.

Stopping and restarting the Continuous Delivery for PE container

Continuous Delivery for PE is run as a Docker container, so stopping and restarting the container is an appropriate first step when troubleshooting.

If you installed Continuous Delivery for PE with the cd4pe module:

- Stop the container:

    service docker-cd4pe stop

- Restart the container:

    service docker-cd4pe start

If you installed Continuous Delivery for PE from the PE console and installed the cd4pe module to automate upgrades:

- Stop the container:

    service docker-cd4pe stop

- Restart the container:

    service docker-cd4pe start

If you installed Continuous Delivery for PE from the PE console and did not install the cd4pe module:

- Stop the container:

    docker stop <CONTAINER_NAME>

- Restart the container, passing in any applicable environment variables:

    docker run <CONTAINER_NAME>

Note: To print the name of the container, run docker ps.

Accessing the Continuous Delivery for PE logs

Because Continuous Delivery for PE is run as a container in Docker, the software's logs are housed in Docker.

To access the logs, run docker logs <CONTAINER_NAME>. To print the name of the container, run docker ps. If you installed the software using the cd4pe module, run docker logs cd4pe.

PE component errors in Docker logs

The Docker logs include errors for both Continuous Delivery for PE and the PE components the software uses. An error in the Continuous Delivery for PE Docker log can therefore indicate an issue with Code Manager, r10k, or another component running outside the Docker container.

For example, the following log output reports a failed module deployment, but the underlying error comes from Puppet Code Deploy, which reports a malformed Git URL:

    Module Deployment failed for PEModuleDeploymentEnvironment[nodeGroupBranch=cd4pe_lab,
    nodeGroupId=a923c759-3aa3-43ce-968a-f1352691ca02, nodeGroupName=Lab environment,
    peCredentialsId=PuppetEnterpriseCredentialsId[domain=d3, name=lab-MoM],
    pipelineDestinationId=null, targetControlRepo=null, targetControlRepoBranchName=null,
    targetControlRepoHost=null, targetControlRepoId=null].
    Puppet Code Deploy failure: Errors while deploying environment 'cd4pe_lab' (exit code: 1):
    ERROR -> Unable to determine current branches for Git source 'puppet' (/etc/puppetlabs/code-staging/environments)
    Original exception: malformed URL 'ssh://git@bitbucket.org:mycompany/control_lab.git'
    at /opt/puppetlabs/server/data/code-manager/worker-caches/deploy-pool-3/ssh---git@bitbucket.org-mycompany-control_lab.git

For help resolving issues with PE components, see the Puppet Enterprise troubleshooting documentation.
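When a log entry is as long as the sample above, it can help to reduce it to the lines that name the failing component. The following is a minimal sketch, not an official tool: the function name summarize_deploy_errors is hypothetical, and the three patterns are taken from the sample output only, so other failures may use different wording.

```shell
# Hypothetical helper: reads log text on stdin and keeps only the lines that
# usually identify the failing component. The patterns are assumptions based
# on the sample log in this section, not a complete list.
summarize_deploy_errors() {
  grep -E 'failure:|ERROR ->|Original exception:'
}

# Usage (requires Docker). docker logs can write to both stdout and stderr,
# so both streams are merged before filtering:
#   docker logs <CONTAINER_NAME> 2>&1 | summarize_deploy_errors
```

In the sample, this would leave the Puppet Code Deploy failure line, the ERROR line, and the original exception, which together point at the Git source URL rather than at Continuous Delivery for PE itself.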

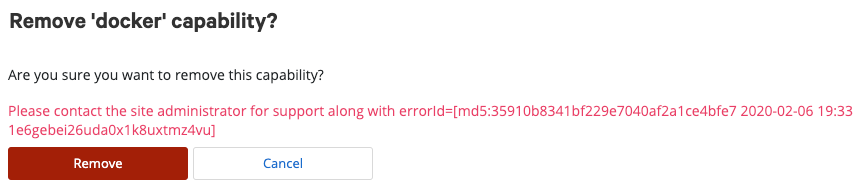

Error IDs in web UI error messages

Some error messages in the web UI include an error ID but no further description. Errors of this kind are shown without additional detail for security purposes. Users with root access to the Continuous Delivery for PE host system can search the Docker log for the error ID to learn more.
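Searching the Docker log for an error ID can be sketched as follows. The function name find_error_id is hypothetical, and the two lines of surrounding context are an arbitrary choice; adjust both to suit your environment.

```shell
# Hypothetical helper: reads log text on stdin and prints every line that
# contains the given error ID, plus two lines of context on either side.
# -F treats the ID as a literal string rather than a regular expression.
find_error_id() {
  local error_id="$1"
  grep -F -C 2 -- "$error_id"
}

# Usage (requires Docker and access to the host system):
#   docker logs <CONTAINER_NAME> 2>&1 | find_error_id "<ERROR_ID>"
```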

Duplicate job logs after reinstall

A job's log is housed in object storage after the job is complete. If you reinstall Continuous Delivery for PE but reuse the same object storage without clearing it, you might notice logs for multiple jobs with the same job number, or job logs already present when a new job has just started.

To remove duplicate job logs and prevent their creation, make sure you clear both the object storage and the database when reinstalling Continuous Delivery for PE.

Name resolution

Inside its Docker container, Continuous Delivery for PE relies on its host's DNS system for name resolution. Many "can't reach" and "timeout connecting" errors reported in the Docker logs are actually DNS lookup failures.

To fix DNS lookup failures, use one of the following approaches:

- Set up a DNS server for Continuous Delivery for PE that includes all the required hosts.

- Use the cd4pe_docker_extra_params flag to add hostnames to the container's /etc/hosts file. The value is an array of strings in the format --add-host <HOSTNAME>:<IP_ADDRESS>. For example:

    ["--add-host pe-201920-master.mycompany.vlan:10.234.3.142", "--add-host pe-201920-agent.mycompany.vlan:10.234.3.110", "--add-host gitlab.mycompany.vlan:10.32.3.110"]

  See Advanced configuration options for more information.

If you need a temporary workaround to solve a name resolution issue, you can edit the Docker container's /etc/hosts file directly. However, these changes are erased whenever the Docker container restarts, so do not use this method as a permanent solution.
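If you maintain many host entries, the --add-host array described above can be generated rather than typed by hand. This is a minimal sketch: the function name build_add_host_params is hypothetical, and it only formats the strings; it does not validate hostnames or IP addresses.

```shell
# Hypothetical helper: turns HOSTNAME:IP_ADDRESS arguments into the
# array-of-strings value expected by cd4pe_docker_extra_params.
build_add_host_params() {
  local sep="" pair
  printf '['
  for pair in "$@"; do
    printf '%s"--add-host %s"' "$sep" "$pair"
    sep=", "
  done
  printf ']\n'
}

# Example using two of the hosts from this section.
build_add_host_params \
  "pe-201920-master.mycompany.vlan:10.234.3.142" \
  "gitlab.mycompany.vlan:10.32.3.110"
```

The printed value can then be pasted into the cd4pe_docker_extra_params setting.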

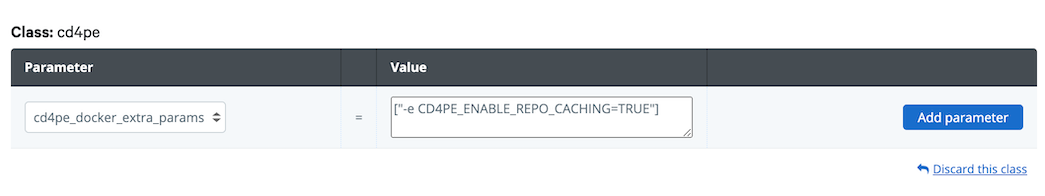

Improving job performance by caching Git repositories

Users with large Git repositories can enable Git repository caching in order to improve job performance. By default, repository caching is disabled.

To enable Git repository caching, set CD4PE_ENABLE_REPO_CACHING=TRUE as a value for the cd4pe_docker_extra_params parameter on the cd4pe class. The parameter takes an array of strings, for example:

    ["--add-host gitlab.puppetdebug.vlan:10.32.47.33","-v /etc/puppetlabs/cd4pe/config:/config","--env-file /etc/puppetlabs/cd4pe/env-extra","-e CD4PE_LDAP_GROUP_SEARCH_SIZE_LIMIT=250"]

Including the .git directory in cached repositories

Beginning with version 3.12.0, the .git directory is automatically omitted when copying cached Git repositories to job hardware. This means that the job cannot perform Git actions on the code. If needed, you can adjust this setting so that the .git directory is included in the cached repository.

To include the .git directory in copies of cached Git repositories sent to job hardware, set CD4PE_INCLUDE_GIT_HISTORY_FOR_CD4PE_JOBS=TRUE as a value for the cd4pe_docker_extra_params parameter on the cd4pe class.
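After changing either caching setting, you may want to confirm that the variable actually reached the container. The sketch below assumes the variables are passed to Docker as environment variables (as the -e entry in the sample parameter value suggests) and that the value is written exactly as TRUE. The function name flag_enabled is hypothetical.

```shell
# Hypothetical helper: reads VAR=VALUE lines on stdin and succeeds only if
# the named variable is present and set to exactly TRUE.
flag_enabled() {
  local name="$1"
  grep -qx -- "${name}=TRUE"
}

# Usage (requires Docker). docker inspect prints the environment variables
# configured on the container, one per line:
#   docker inspect --format '{{range .Config.Env}}{{println .}}{{end}}' <CONTAINER_NAME> \
#     | flag_enabled CD4PE_ENABLE_REPO_CACHING && echo "repository caching is enabled"
```

The same check works for CD4PE_INCLUDE_GIT_HISTORY_FOR_CD4PE_JOBS. A variable that is absent is reported as not enabled, which matches the documented defaults.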

Adjusting the timeout period for jobs

In some circumstances, such as when working with large Git repositories, you may need to adjust the length of the job timeout period. Use the environment variables in this section to customize your job timeout period.

Set each variable as a value for the cd4pe_docker_extra_params parameter on the cd4pe class. The parameter takes an array of strings, for example:

    ["--add-host gitlab.puppetdebug.vlan:10.32.47.33","-v /etc/puppetlabs/cd4pe/config:/config","--env-file /etc/puppetlabs/cd4pe/env-extra","-e CD4PE_LDAP_GROUP_SEARCH_SIZE_LIMIT=250"]

| Variable | Description | Default value |
|---|---|---|
| CD4PE_JOB_GLOBAL_TIMEOUT_MINUTES | Sets the default timeout period for jobs. Once this timeout period elapses, the job fails and a timeout message is printed to the log. | 12 (minutes) |
| CD4PE_JOB_HTTP_READ_TIMEOUT_MINUTES | Sets the timeout period for a job connecting to an endpoint. | 11 (minutes) |
| CD4PE_REPO_CACHE_RETRIEVAL_TIMEOUT_MINUTES | Only used if Git repository caching is enabled. Sets the timeout period for a thread attempting to access a cached Git repository. | 10 (minutes) |
| CD4PE_BOLT_PCP_READ_TIMEOUT_SEC | Sets the Bolt PCP read timeout period. Note: Jobs cannot proceed while file sync is running. If a file sync operation is not completed before the Bolt PCP read timeout period elapses, the job fails. Increase the Bolt PCP read timeout period to prevent these job failures. | 60 (seconds) |

To ensure that all useful error messages are printed to the logs, make sure that the value of CD4PE_JOB_GLOBAL_TIMEOUT_MINUTES is larger than the value of CD4PE_JOB_HTTP_READ_TIMEOUT_MINUTES, and that both are larger than the value of CD4PE_REPO_CACHE_RETRIEVAL_TIMEOUT_MINUTES.
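The ordering rule above can be checked before you apply new values. This is a minimal sketch, assuming whole-number minute values. The function name check_job_timeouts is hypothetical, and the defaults it falls back to are the documented ones.

```shell
# Hypothetical helper: verifies GLOBAL > HTTP_READ > REPO_CACHE_RETRIEVAL.
# Arguments are minutes, in that order; omitted arguments use the defaults
# listed in the table (12, 11, 10).
check_job_timeouts() {
  local global="${1:-12}" http="${2:-11}" cache="${3:-10}"
  if [ "$global" -gt "$http" ] && [ "$http" -gt "$cache" ]; then
    echo "ok"
  else
    echo "invalid: expected GLOBAL > HTTP_READ > REPO_CACHE_RETRIEVAL" >&2
    return 1
  fi
}

# The documented defaults satisfy the rule.
check_job_timeouts 12 11 10
```

For example, raising only the repository cache timeout to 15 minutes while leaving the other defaults in place would fail this check, which is a prompt to raise the other two values as well.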